Applications have been tied to the operating system for as long as we can remember. And why shouldn't they be? The operating system is, after all, the way applications interface with the underlying hardware. Something has to talk to the disk and the network card. The end user has to interact with the end application via the keyboard or the monitor, right?

The First Abstraction

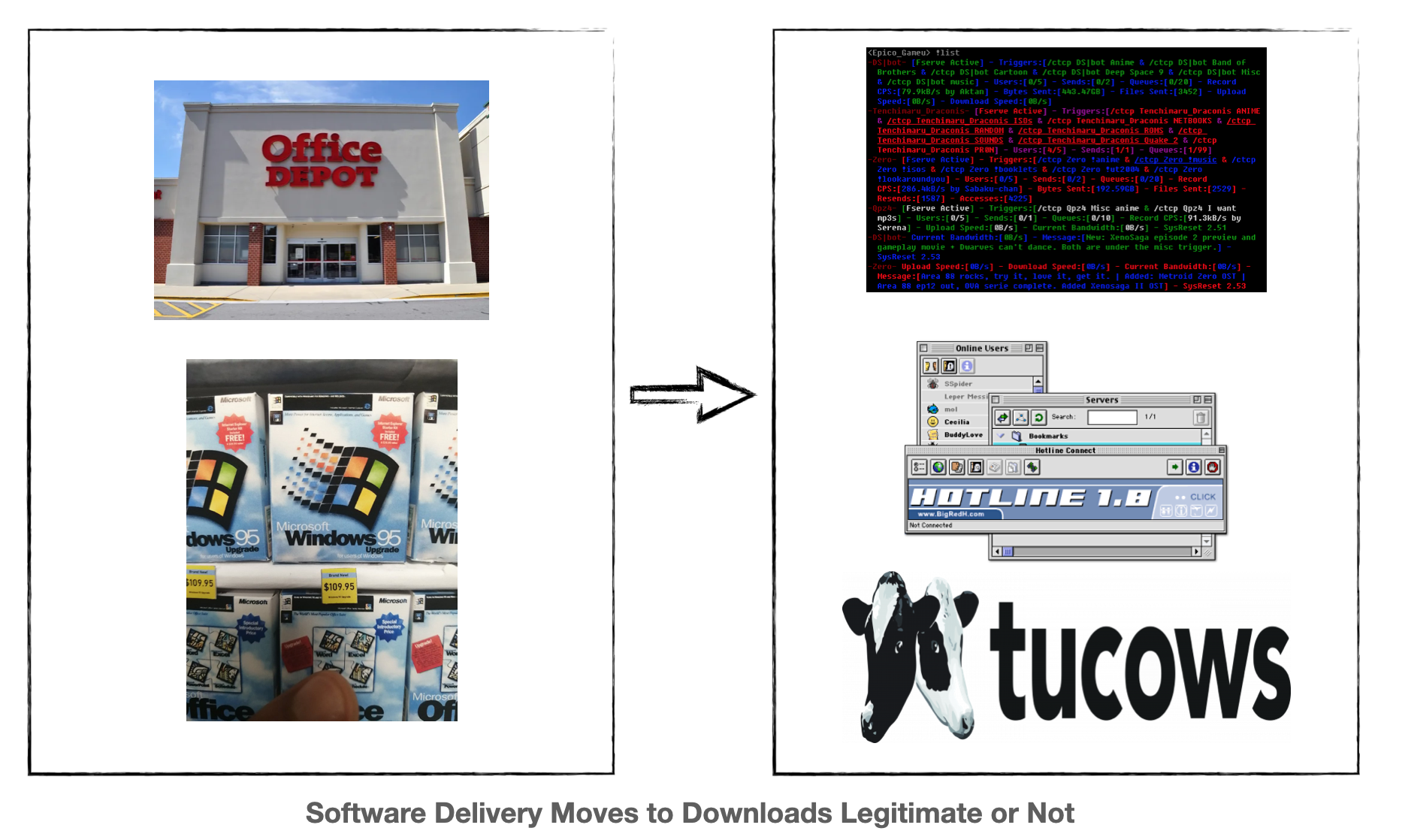

The browser, via the internet, was the first abstraction, although during the 90s it wasn't readily apparent as desktop software still reigned supreme. SaaS would not really arrive for another 15 to 20 years, Benioff’s infamous protest in 2000 notwithstanding. This was illustrated by the various file sharing networks that characterized the late 90s, such as IRC fserves and later on software such as Hotline, and shareware on sites such as Tucows. These of course grew out of the earlier BBS scene, and while file sharing networks proliferated you could already tell the dominance of the browser over competing protocols was entrenching itself.

All legitimate software was obviously distributed on CD-ROMs and sold in places like Office Depot. While open source was most definitely a thing (and arguably a very, very, very different community), commercial/consumer software didn't touch it.

I point this out because both software distribution and software consumption started to change dramatically over the next few decades.

The Second Abstraction

Then something funny happened during the first few years of the third millennium: commercialized virtualization was brought to the fore. While various forms of virtualization had been around for a while, they really weren't commercialized to the extent that they were at this point.

Simultaneously our hardware gained commodity SMP support - that is, the ability to run multiple processors with multiple cores - again a capability that had been around for a bit but hadn't really become ubiquitous enough to be consumer-grade. With that followed truly performant multi-threading on free operating systems such as Linux. I still remember in school being told that threads were horrible -- and that was true at a certain point in time but that started to change.

Only a few years later the cloud was born, erupting from Jeff Bezos's forehead in full battle armor as an API over virtualization. The public clouds are still screaming along at breakneck speed. Today AWS sports over 200 services and that is just AWS - not counting Google, Azure or any of the others.

Virtualization had finally freed engineers from interfacing directly with hardware.

SaaS On

Once the cloud was in full swing a new cycle began with the saasification of everything. All that software that was being sold in Office Depots or, for higher ticket items, via boots-on-the-ground sales started appearing as websites. Individual software applications that might once have been downloaded now appeared as a single-pane website.

One could be forgiven for noticing only that distribution and accessibility had changed, but other things under the covers had taken effect too.

Software that might have been installed on a consumer-facing, real piece of hardware running Windows was now being served up on virtual machines, running in the cloud, on a free, open source operating system known as Linux. While the core of the software was obviously written by engineers at the company producing it, vast swaths of the component pieces were third party pieces of software that were freely available for use.

Whether it was databases such as MySQL and PostgreSQL, or webservers such as Apache and nginx, the proliferation of freely available third party software in use by large companies, but not written by them, accelerated.

Just to key in on this - it was no longer a single application sitting on a computer serving up a particular business function. It was a website composed of many different applications running on many different virtual servers serving up a particular business function.

This might seem like a trivial observation but it sets the stage for...

The Second Coming of Virtualization

While the clouds abstract away various parts of the real hardware, unikernels abstract away various parts of the operating system - no longer constrained by actual real hardware. Indeed, we recently coded support for a new architecture that we didn't even have real hardware to run on!

So it wasn't just hardware. It was concepts that simply didn't make sense in a world where a single application might not even fit on a few virtual machines.

Imagine going to the arcade around 1991 or 1992. You have hundreds of arcade machines to choose from after filling up your pockets with quarters. Each arcade machine, at this point in time, offers you one game per actual machine. You can choose Mortal Kombat or you can choose Street Fighter 2 but if you want to play one you are interfacing with a different machine with a different screen and a different joystick.

Today I can choose from thousands of those on an emulator like MAME. Indeed, I can even play modified versions of these games that have different levels, different characters, different difficulties, etc. I illustrate this because it shows you the wide possibilities that open up when you virtualize not just the system but also the actual end application. Keep in mind - in cloud 1.0 the "application" is actually Linux and whatever applications you run on it - the virtual machine.

It is one thing to virtualize the machine and get certain benefits but once you have done that, it opens up the capability to make completely different applications as well. You don't have to play by the old rules anymore that were designed in the early 1970s -- rules that were designed for real computers.

There has been quite a lot of attention paid to ecosystems like VDI, but no attention has been paid to the server side of the house, which is where almost all software is being produced and resides today.

The scale of the server-side ecosystem probably wouldn't catch your attention if you weren't a software engineer but the mind boggles at the sheer size of it. We're not talking about companies like Red Hat that work as 'server-side operating system' companies. We're talking about companies like MongoDB or Confluent or Databricks. Software, predominantly open-source or at least source available, that was written to run on server operating systems.

Apps on One Server or One VM

Your modern software "application" is not just one application. Most companies simply can't fit their software on a single server anymore. Whether it is choice of language, number of engineers involved, or expected number of users/customers, we blew past the whole "one operating system to manage many applications" model a long, long time ago.

If you spin up a virtualized Linux instance, something like a small EC2 instance, you might run your application on top. Then you might install monitoring, logging and such. More than likely you are not installing the database that it talks to on that same VM. You might have a load balancer splitting traffic between multiple copies of the same virtual machine.
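To make the last step concrete, here is a minimal sketch of a load balancer spreading requests across identical copies of a VM. It assumes a plain round-robin policy, which is only one of several common choices, and the class name and the private addresses are hypothetical.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backends in a fixed rotation; a toy stand-in for a real load balancer."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one backend is required")
        self._backends = list(backends)
        self._rotation = cycle(self._backends)

    def pick(self):
        """Return the backend that should receive the next request."""
        return next(self._rotation)


# Hypothetical private addresses of three identical application VMs.
balancer = RoundRobinBalancer(["10.0.0.11", "10.0.0.12", "10.0.0.13"])
assignments = [balancer.pick() for _ in range(6)]
print(assignments)
```

Each copy of the VM receives every third request here. A real balancer would also track health and connection counts, which this sketch leaves out.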

You see, your typical developer might be running their software on tens or hundreds or even thousands of virtual machines. The problem is that they still treat each individual virtual machine as an operating system running on an individual computer, which is not the case. The cloud native folks came in and created another abstraction on top of the abstraction that already exists, but now they have to manage three layers: the virtualized guest operating system, k8s, and the application.

The reality is that the cloud is the operating system. Unikernels, unencumbered by both hardware and general purpose operating systems, allow the end user to treat those tens to hundreds of virtual machines as simply applications and not have to micro-manage each individual one. They then get the further benefit that all the actual real operating system layers are fully managed by Amazon, Google and others tasked with ensuring hundreds of warehouse-scale computers stay up.

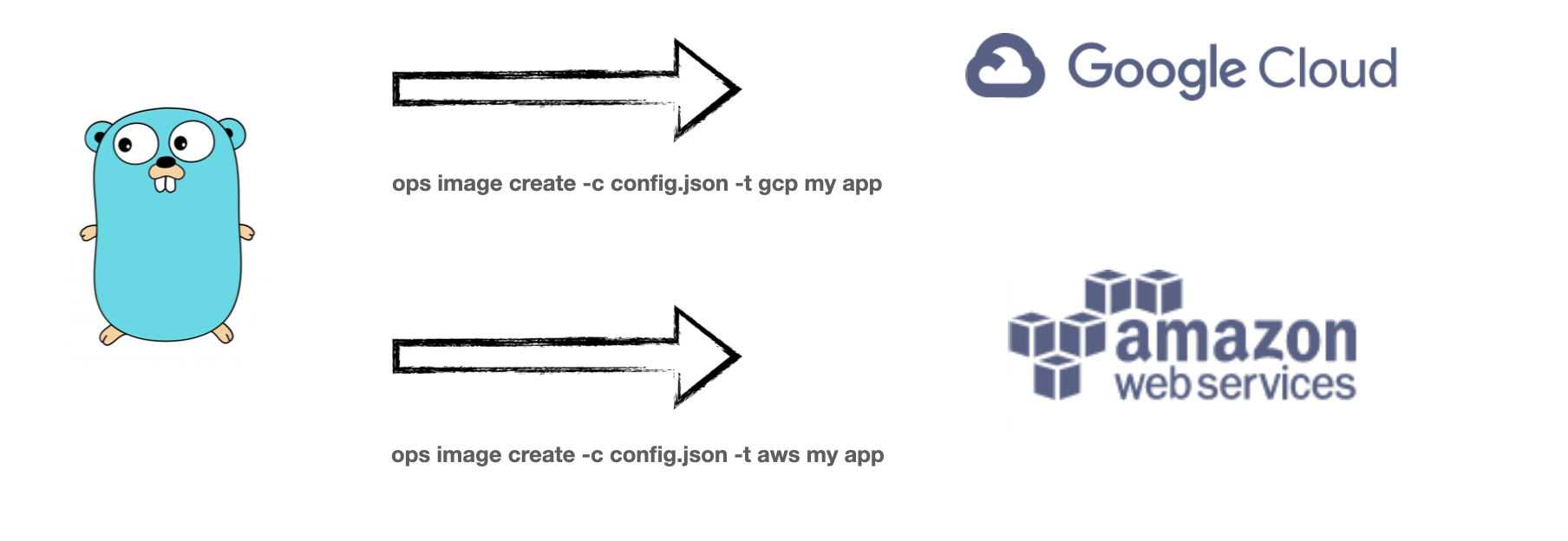

All the pundits that have long predicted multi-cloud deploys can now be satisfied, as you can spin up the same unikernel on AWS, GCP and Azure in the same amount of time and it's the same application. You don't have to run Terraform. You don't need to figure out the difference between AKS vs EKS vs GKE. Within tens of seconds across 'image create' and 'instance create' you are deployed. The underlying infrastructure is slightly different because instance types and hypervisors on each cloud are going to perform and behave slightly differently, but the primitives of the VM, the NIC, the VPC, etc - that's all the same.
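The shape of that workflow can be sketched as follows: the same two steps, run with the same image artifact, against each provider. Everything here is a hypothetical in-memory stand-in (the `FakeCloud` class, its method names and the instance ID format are assumptions for illustration); no real cloud API or CLI is being modelled.

```python
from dataclasses import dataclass, field


@dataclass
class FakeCloud:
    """In-memory stand-in for a cloud provider; no real API is called."""
    name: str
    images: dict = field(default_factory=dict)
    instances: list = field(default_factory=list)

    def image_create(self, image_name, artifact):
        # Step 1: register the unikernel disk image with the provider.
        self.images[image_name] = artifact

    def instance_create(self, image_name):
        # Step 2: boot a VM from the registered image.
        if image_name not in self.images:
            raise KeyError(f"{image_name} has not been uploaded to {self.name}")
        instance_id = f"{self.name}-{image_name}-{len(self.instances)}"
        self.instances.append(instance_id)
        return instance_id


def deploy_everywhere(clouds, image_name, artifact):
    """Run the same two steps against every provider with the same artifact."""
    deployed = {}
    for cloud in clouds:
        cloud.image_create(image_name, artifact)
        deployed[cloud.name] = cloud.instance_create(image_name)
    return deployed


clouds = [FakeCloud("aws"), FakeCloud("gcp"), FakeCloud("azure")]
result = deploy_everywhere(clouds, "webapp", b"unikernel-image-bytes")
print(result)
```

The point of the sketch is that nothing in `deploy_everywhere` is provider-specific: the differences between clouds live behind the two primitives.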

All the pundits that have long predicted 'serverless' now have a true cross-cloud solution that includes many features missing from the current solutions (such as persistence).

Even all the angst generated in the past few months about the state of Heroku and platforms like it starts to disappear as you get the same sort of guarantees and look and feel at better price points.

Nothing is Safe

In this world there is no software on the server-side that is safe from disruption. Logging, monitoring, databases, queues, webservers -- every established sector now has the capability of being refactored, reinvented, reenvisioned and made faster, safer, stronger, and better than the status quo.

It isn't even something that I could see our company handling alone - after all, each of those application sets I just mentioned is an established industry in its own right, measured in tens if not hundreds of billions of dollars. Merely deploying many of these software applications as-is with no code changes already nets you various performance and security improvements, not to mention the fact that management costs go way down.

A handful of years ago one of the larger conversations amongst companies like Mongo and Elastic was about fighting against Amazon ripping them off. Now, however, they have to realize that a unikernelized version of the same software can be managed by yet another third party and win on the improvements alone. Sprinkle a little added value sauce on top and you have a perfect storm for market mayhem. I just googled for elastic hosting and there are at least a dozen offerings for that alone.

But that's not all folks - we're talking about unmodified applications here. What happens when you create a fork like KeyDB, for instance, that adds multi-threading to something like Redis? There are many many cases such as this where tiny little changes make an application so much better deployed as a unikernel. Then of course you have the applications and languages that are 25-30 years old, designed for systems and machines from the mid 90s, sitting like ducks in a pond.

I haven't discussed what happens when we get newer languages that are more amenable to this new universe of software. One thing I personally keep watch on is the influence of isolates on scripting languages. Concepts like JVM virtual threads are going to be a real game changer too.

I think there is going to be a veritable explosion of companies operating in the unikernel space as there is just way too much TAM and way too much opportunity. If you are working on something in the space, reach out - we might be able to help you.

Stop Deploying 50 Year Old Systems

Introducing the future cloud.